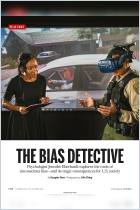

Machine Bias

There’s software used across the country to predict future criminals. And it’s biased against blacks.

Recommendation

Imagine a computer program that can predict whether a criminal will strike again. Now imagine that the program isn’t accurate. Senior reporters and editors at ProPublica poke holes in one of the dozens of risk assessment programs that determine the fate of defendants across the United States. They found that one popular tool incorrectly flagged black defendants as likely reoffenders twice as often as it did white defendants, exposing some to longer incarceration. getAbstract recommends this revealing analysis to anyone enthusiastic or skeptical about the use of algorithms in the American judicial system.

About the Authors

Julia Angwin, Jeff Larson and Lauren Kirchner hold senior reporting and editing positions at ProPublica. Surya Mattu is a contributing researcher.
